As the summer break draws closer, both the European Parliament and the Council are intensifying their efforts to wrap up their positions on the proposed Copyright in the Digital Single Market directive. In both legislative bodies Article 13 (the upload filters for online platforms) remains the main stumbling block, and both the Bulgarian Council presidency and the EP’s rapporteur (MEP Voss) have set deadlines this week to wrap up the discussion on Article 13.

Last week (after yet another inconclusive meeting on Article 13) MEP Voss asked the political groups to provide him with their final written comments “on the MAIN and MOST IMPORTANT open issues” by Wednesday the 23rd. For the same date the Bulgarian Council presidency has scheduled an attaché meeting to discuss the latest compromise proposal.

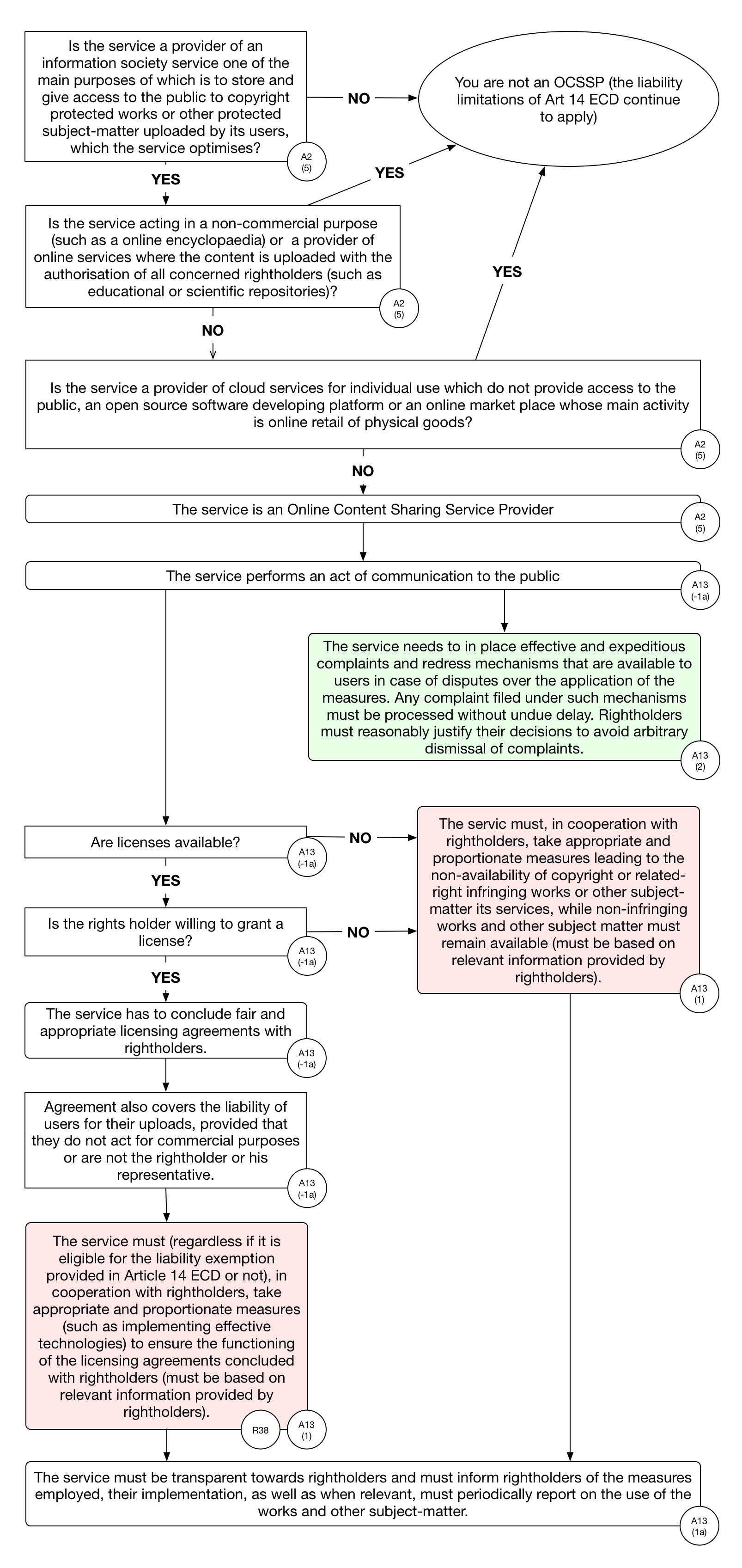

In light of these (final?) attempts to wrap up the discussion it is important to take another look at how the discussion has evolved since the Commission published its proposal and how the three different versions of Article 13 compare to each other. To do so we have analysed the internal logic of the Commission proposal, the last Bulgarian compromise proposal and version 6 of the European Parliament’s Legal Affairs committee compromise text, and depicted the most important elements in a series of flowcharts (see below). Even a casual glance at these makes it clear that both the Council’s and the Parliament’s changes to the text have resulted in vastly more complex versions.

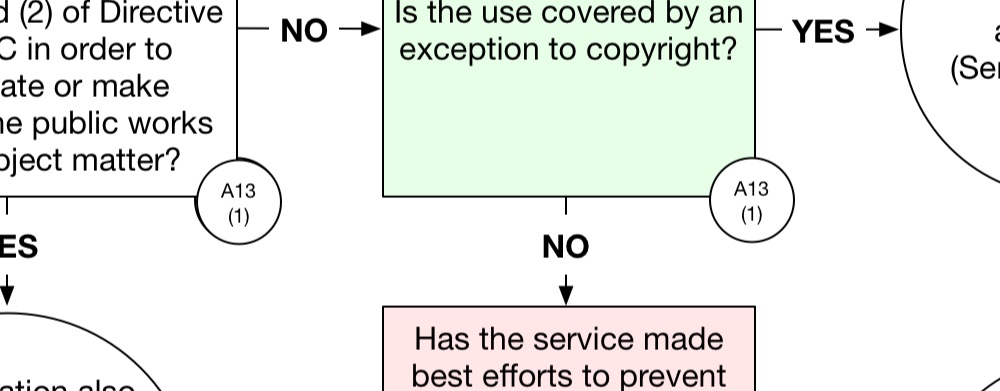

Commission proposal: Simple language that creates a legal mess with lots of uncertainties.

Compared to the other two versions the Commission’s proposal is a thing of beauty. The article consists of three relatively concise paragraphs, which result in a relatively straightforward flowchart:

It is worth noting here that the Commission version of Article 13 manages to be so simple because it attempts to hide some of the most consequential provisions in Recital 38 of the text. Recital 38 contains language that attempts to redefine the activities of platforms that allow user uploads as acts of communication to the public and in doing so to strip these platforms of the liability limitations of article 14 of the eCommerce Directive (ECD). Stripped of the liability limitations, those platforms with “large amounts” of user uploaded content need to conclude licensing agreements with rightsholders and to deploy upload filters to filter out all unlicensed content.

We have previously described the main problems with the Commission proposal in more detail. In summary, the most important problems are that it would require online platforms to filter all user uploads (even though it is clear that filters can’t distinguish between legitimate and infringing uses of content), that it creates a lot of legal uncertainty for online platforms and that it contains insufficient safeguards for users who will be limited in their freedom of creative expression. For all of these reasons we think that Article 13 should be deleted from the proposed directive.

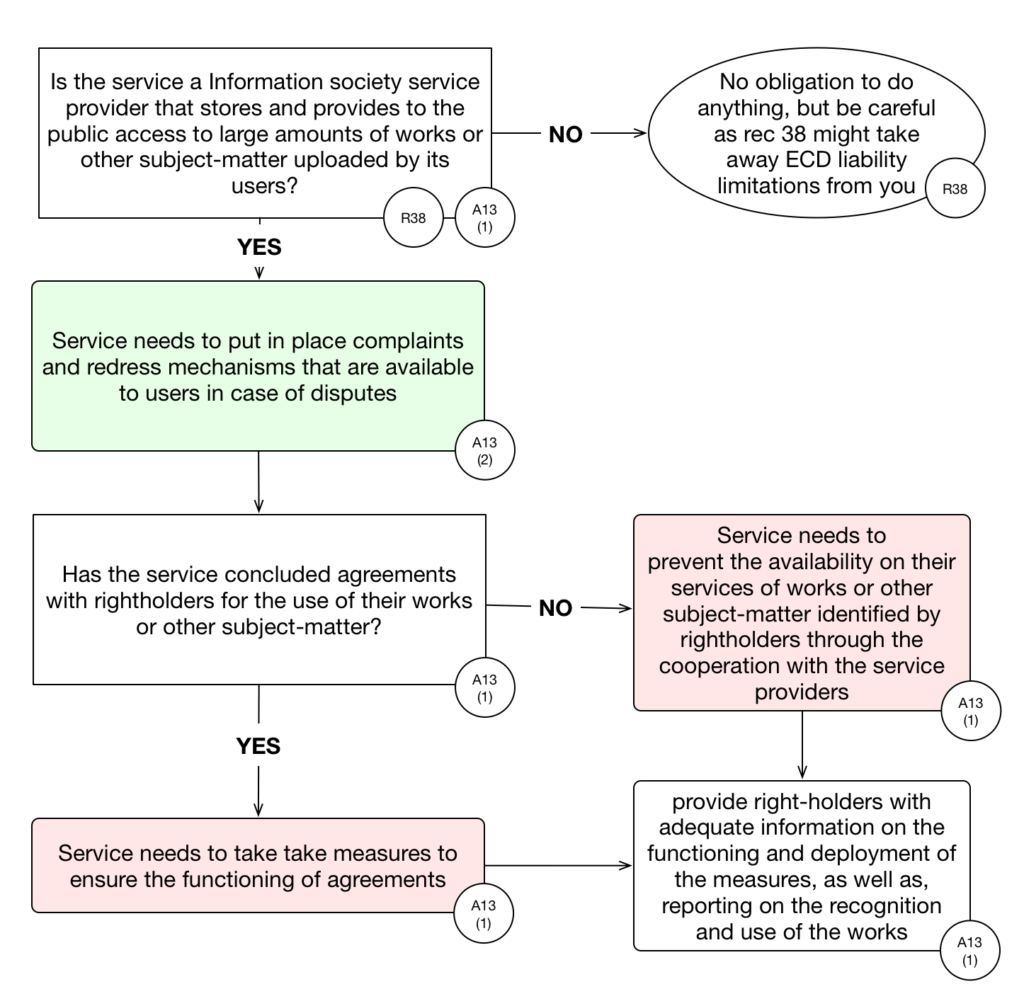

Council: a new parallel liability regime for Online Content Sharing Service Providers

The main difference between the current Council compromise proposal and the original Commission text is that the former is much more explicit about the intention of Article 13. The Council text brings a number of issues that are hidden in recital 38 of the Commission text out into the open. The result is a much longer Article (it has grown to 8 paragraphs) with a much more complex structure:

Before going into the details here it is important to highlight that the core idea of the Commission’s proposal remains unchanged: platforms that allow user uploads (in other words all open platforms) will need to conclude licensing agreements and deploy upload filters that filter all unlicensed content that has been identified by rightsholders. Where the Commission’s proposal would achieve this by creating legal uncertainty around the application of the liability limitations of the eCommerce Directive, the Council text minces no words and provides (in paragraph 3 of Article 13) that the ECD liability limitations do not apply to Online Content Sharing Service Providers (OCSSPs – their fancy term for open platforms).

The text further requires OCSSPs to obtain licenses from rightsholders and to filter out all content that has been identified by rightsholders and subsequently to ensure that once filtered out it remains unavailable. As such, the Council’s text replaces the notice and take down approach of the ECD with a licensing requirement coupled with a notice and stay down approach. Where the notices given under the ECD regime need to identify infringing uses of a protected work, these new Article 13 notices are simple claims that a work is owned by a rights holder regardless of whether the use is infringing or not.

This will have a substantial effect on the freedom of creative expression online (more content will be taken down and filtered at upload). The fact that, in an act of wishful thinking, the Council text requires Member States to ensure that the filtering measures do not negatively affect the user rights granted under exceptions and limitations does not change this. Filtering technology is simply not capable of detecting when a use is covered by an exception and when it is not.

The other big change made by the Council text is the introduction (in article 2) of a definition of the services (the above-mentioned Online Content Sharing Service Providers) that will need to comply with the obligations established in Article 13. While this introduces some more clarity than the Commission’s ‘services that share “large amounts” of content’ criterion, it still does more harm than good.

As we have argued in more detail before it is pretty much impossible to define a specific set of services by describing how they interact with copyright without having that definition apply to nearly any open platform. The Council’s text contains a set of exceptions that apply to a range of not-for-profit online platforms, such as Wikipedia, but even with these in place it is destined to cause a lot of collateral damage far beyond the types of platforms that are the real target of Article 13. To please the content industry the Member States are clearly willing to put large parts of the European digital economy into jeopardy.

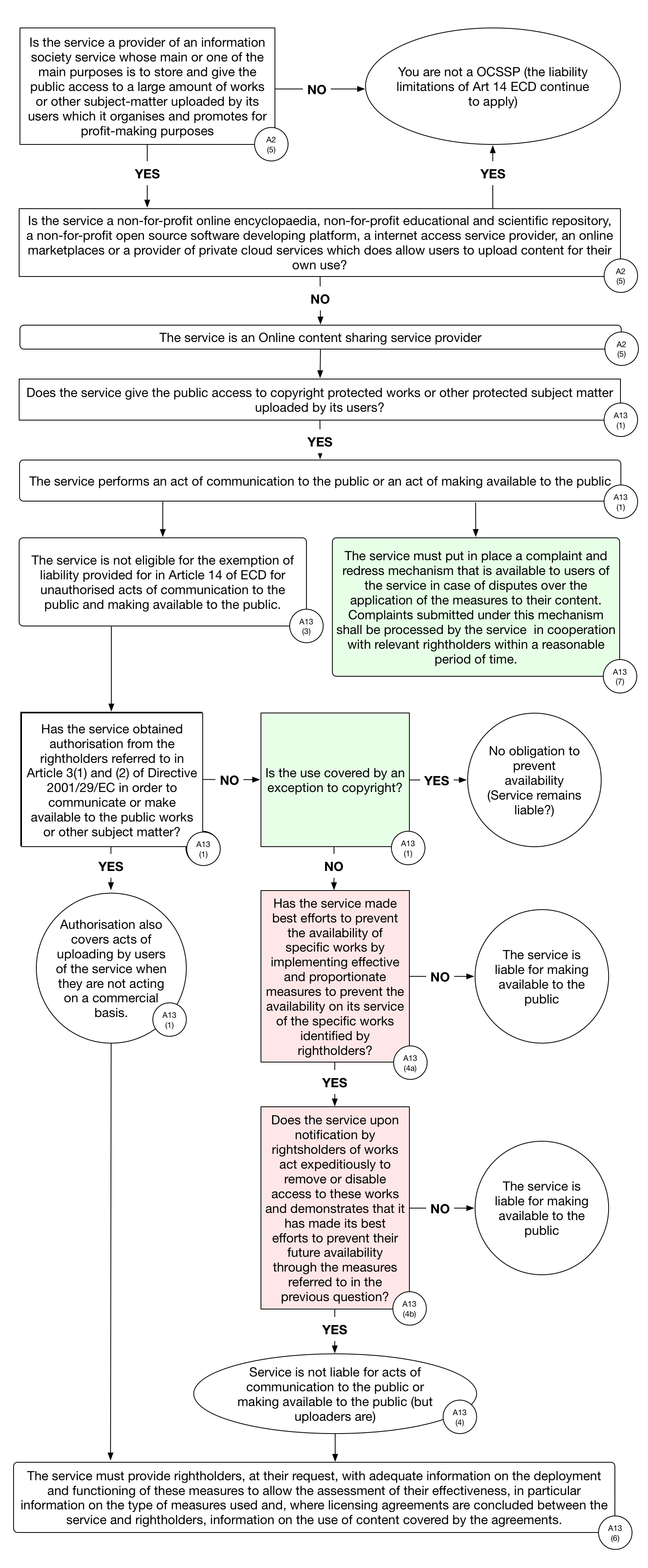

European Parliament: Filtering obligations with a healthy dose of wishful thinking

The current European Parliament compromise is very similar in structure to the Council text and mirrors the Council’s approach of defining the services (also referred to as OCSSPs) that need to comply with the provisions established by Article 13. With regard to the actual provisions of the article it lacks some of the clarity of the Council’s text (it largely stays away from the question of whether OCSSPs are covered by the ECD liability limitations or not) while keeping the core of the Commission’s proposal (open platforms need to obtain licenses and deploy upload filters) intact. The result looks rather messy:

What the Parliament text lacks in legal clarity it tries to make up for with wishful thinking. In response to the widespread criticism of the Commission proposal from civil society groups, technology companies and academics, a number of provisions have been added to the text that, on the surface, seem to protect the rights of users.

On closer inspection these additions attempt to will the impossible into existence: similar to the Council’s version, the Parliament text requires that the upload filters must ensure “the non-availability of copyright or related-right infringing works or other subject-matter [on the platforms], while non-infringing works and other subject matter must remain available”. This is of course something that filters cannot achieve, and in practice this provision will not prevent legitimate uses of content from being filtered out on a large scale.

In similar expressions of wishful thinking the Parliament’s text requires Member States to ensure that the “implementation of [the filtering measures] shall be proportionate and strike a balance between the fundamental rights of users and rightholders and shall not impose a general obligation on OCSSPs to monitor the information which they transmit or store”.

Instead of confronting the fact that filtering technology is not suited to distinguishing between legitimate acts of cultural expression and copyright infringement, the Parliament seems to have chosen to saddle the Member States with the impossible task of ensuring that upload filters respect user rights. This is not only a cowardly act of Symbolpolitik but also a complete failure of Europe’s elected representatives to stand up for the interests of internet users in the EU.

Looking ahead: deletion remains the only (sensible) option

After more than one and a half years neither the Council nor the Parliament has found a way of fixing the problems of the Commission’s original proposal. Of the two approaches the Council’s plan is the more honest one, as it does not hide what it is trying to achieve. But neither the Council’s nor the Parliament’s approach manages to tailor the filtering and licensing requirements in such a way that they will only affect their intended targets (large commercial content sharing platforms with advertising-based business models).

To anyone paying close attention this does not come as a surprise: In a world where open online platforms for sharing copyrighted content (other than music and video) are central to the digital economy, modifying the copyright rules to the benefit of a small class of rightsholders will have adverse effects throughout the digital economy. The only way to prevent these is to delete Article 13 from the directive and to address the question of remuneration for the use of creative content by open platforms in a context that is separate from general copyright policy making.

A note on the flowcharts: These have been built based on the latest version of the respective texts and illustrate the most important operative provisions of these texts. In order to keep them manageable we have ignored some of the details. In each flowchart we have highlighted the actual filtering provisions in light red and safeguards for user rights in light green. If you have any feedback or questions related to the flowcharts feel free to leave a comment below or mail us at communia@communia-association.org.